“Let’s Verify, Step by Step”: OpenAI’s Process Supervision Training to Overcome Hallucinations?

In recent years, Large Language Models (LLMs) have greatly improved at complex, multi-step reasoning, including chain-of-thought reasoning. Yet these models still regularly produce logical mistakes, often referred to as hallucinations.

OpenAI seeks to reduce these mistakes by focusing on the chain of thought, in which the model reasons step by step rather than returning a result immediately.

The idea is to introduce a supervision method that focuses on optimizing the thinking steps rather than the final outcome.

OpenAI calls this method “Process Supervision” and, in the paper, compares it to “Outcome Supervision”.

The premise of Outcome Supervision is to provide feedback only at the end of the reasoning chain, i.e., the final output of the model. Process supervision, on the other hand, provides feedback at every intermediate step of reasoning.
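To make the difference concrete, here is a minimal sketch in Python. It is not the paper's implementation: the `Solution` class, the two feedback functions, and the toy `arithmetic_step_is_valid` checker are all hypothetical names introduced for illustration, with the checker standing in for whoever or whatever labels the steps.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Solution:
    steps: List[str]      # intermediate reasoning steps, e.g. "3 * 4 = 12"
    final_answer: str     # the answer the model ends up with


def outcome_feedback(solution: Solution, reference_answer: str) -> bool:
    """Outcome supervision: a single label for the whole chain."""
    return solution.final_answer == reference_answer


def process_feedback(solution: Solution,
                     step_is_valid: Callable[[str], bool]) -> List[bool]:
    """Process supervision: one label per intermediate step."""
    return [step_is_valid(step) for step in solution.steps]


def arithmetic_step_is_valid(step: str) -> bool:
    """Toy checker (an assumption): verifies steps shaped like 'a op b = c'."""
    lhs, rhs = step.split("=")
    a, op, b = lhs.split()
    ops = {"+": lambda x, y: x + y, "*": lambda x, y: x * y}
    return ops[op](int(a), int(b)) == int(rhs)


# Problem: 2 + 3 * 4. The second step contains an arithmetic slip.
solution = Solution(steps=["3 * 4 = 12", "2 + 12 = 15"], final_answer="15")

print(outcome_feedback(solution, reference_answer="14"))      # False
print(process_feedback(solution, arithmetic_step_is_valid))   # [True, False]
```

The outcome label only says that the answer is wrong, while the per-step labels also show where the reasoning went off track.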

All this is discussed in detail in OpenAI’s paper “Let’s Verify Step by Step”, which we will explain in this blog.

Prerequisites

Chain Of Thoughts in Large Language Models

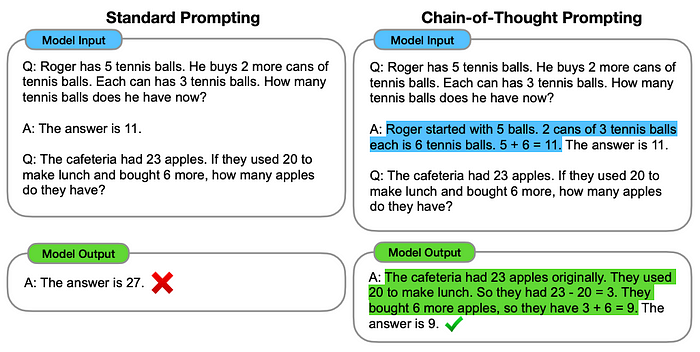

Before delving into Process Supervision, I would like to introduce the concept of Chain Of Thoughts.

Large Language Models can not only answer instructions but also, when prompted, produce intermediate reasoning steps in natural language.

The paper “Chain-of-Thought Prompting Elicits Reasoning in Large Language Models” shows that prompting an LLM with a few examples that spell out the reasoning before the final answer achieved state-of-the-art accuracy, at the time of publication, on the GSM8K benchmark of math word problems.
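As a rough illustration of what such a prompt looks like, the sketch below assembles a few-shot chain-of-thought prompt as plain text. The `build_cot_prompt` helper, the `Q:`/`A:` layout, and the exemplar are assumptions made for this example, not a prescribed format, and sending the prompt to a model is left out.

```python
from typing import List, Tuple

# (question, worked reasoning, final answer)
Exemplar = Tuple[str, str, str]


def build_cot_prompt(exemplars: List[Exemplar], question: str) -> str:
    """Join worked examples, then append the new question with an open answer."""
    blocks = []
    for q, reasoning, answer in exemplars:
        # Each exemplar shows the reasoning first and the answer last.
        blocks.append(f"Q: {q}\nA: {reasoning} The answer is {answer}.")
    # The model is expected to continue from here with its own reasoning.
    blocks.append(f"Q: {question}\nA:")
    return "\n\n".join(blocks)


exemplars = [
    (
        "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
        "Each can has 3 tennis balls. How many tennis balls does he have now?",
        "Roger started with 5 balls. 2 cans of 3 tennis balls each is "
        "6 tennis balls. 5 + 6 = 11.",
        "11",
    ),
]

prompt = build_cot_prompt(
    exemplars,
    "A baker made 24 muffins and sold 9. How many muffins are left?",
)
print(prompt)
```

Because the exemplar answers walk through the reasoning before stating the result, the model tends to follow the same pattern for the new question.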